For the first time, on 15 May 2018, Facebook published data on the number of enforcement actions it has taken to tackle spam and fake accounts on the platform.

The report covers violations involving content such as graphic violence, hate speech, fake accounts and spam over a six-month period (October 2017 – March 2018). It is a company first for Facebook and part of a bid to make the platform more transparent in the wake of the Cambridge Analytica data scandal. However, while it's a step in the right direction, Guy Rosen, VP of Product Management, noted that there is ‘a lot of work still to do to prevent abuse’ [1].

Going through the data, the sheer amount of bad content Facebook has taken down is quite shocking. While that content is unpleasant, the numbers also show that Facebook is still one of the most used social platforms despite all the recent negative press coverage.

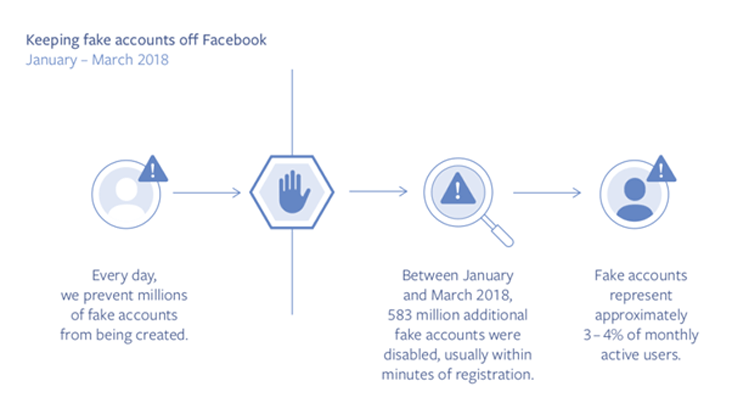

Most of the items removed in Q1 2018 were spam and fake accounts. Facebook took down 837 million pieces of spam (almost all of which Facebook flagged itself before any user reported them) and 583 million fake accounts. However, the report also estimates that 3–4% of accounts on the platform in Q1 2018 were still fake.

Some other key stats on violating content in Q1 include:

- 21 million pieces of adult nudity and sexual activity

- 3.5 million pieces of violent content (either removed or warning labels applied)

- 2.5 million pieces of hate speech

Facebook uses technology to check content and, as mentioned above, in some areas it flags almost 100% of the items that are taken down before users report them. However, the technology still needs to be developed in other areas. Hate speech, for example, is much harder to detect automatically and relies on manual checks by the review teams: only 38% of the hate speech content acted on was flagged by technology first.

I for one welcome the more transparent approach being taken by Facebook and hope to see continued efforts to make the platform a less hateful place. As Guy Rosen summed up in his statement, Facebook ‘believe that increased transparency tends to lead to increased accountability and responsibility over time, and publishing this information will push us to improve more quickly too. This is the same data we use to measure our progress internally — and you can now see it to judge our progress for yourselves.’

Sources:

- [1] https://newsroom.fb.com/news/2018/05/enforcement-numbers/ (accessed 16-05-2018)

- [2] https://transparency.facebook.com/community-standards-enforcement